Development

Technical Research Prototyping

P1 – Python Web Framework

Prototype Purpose

Starting the initial prototyping stage of this project, it was necessary to investigate and select a suitable Python web framework that aligned with the requirements of Integrated Workforce Management Platform. One essential feature required for the core functionality of the project is the ability to handle concurrent/simultaneous actions throughout the platform due to its account-based usage and constant access requirements. To deliver this feature there is a requirement for an asynchronous capable web framework, meaning its ability to handle concurrent I/O operations. These I/O operations, classified as operations reading/writing data outside of its CPU, include database queries, authentication checks, and background schedule updates – all features that would have to be performed regularly and possibly simultaneously in a large-scale deployment. Contrastingly, a synchronous framework would heavily struggle with the demanding constant usage, as execution – i.e. the carrying out of tasks – would be blocked while waiting for a currently occurring operation to complete, reducing Integrated Workforce Management Platform’s ability to handle many simultaneous requests at once. Furthermore, due to the projects nature as a web-based system, structured and predictable communication between the client and server side is essential. One specific method of assuring these predictable communications is making sure that the chosen web framework can be RESTful, with standardised HTTP methods to represent actions on resources. Furthermore, by assuring a RESTful approach, the concept of statelessness is also a requirement, meaning each request includes all authorization and context – disregarding the need for a server-side session state. This further improves concurrency of users without significant strain on the hardware due to less information being stored – a feature perfect for Integrated Workforce Management Platform. While the implementation of such standards falls on the developer, the capability must be evident in the framework to be implemented effectively.

Method Identification

Therefore, I took to researching different frameworks, some of which I had used in the past and some of which were new to me. Several potential frameworks were considered based on my prior experience and use, including Flask, Django, FastAPI, and Sanic. However, only one framework exhibited the ideal combination of ease of use, flexibility and performance capabilities required for this project. The following section will identify and evaluate the features of each shortlisted framework in the justification of the final selection.

While Flask is lightweight, beginner-friendly, has a very low learning curve, and is highly suitable for small scale applications, it is primarily synchronous in nature. As explained above, causing significant issues in relation to simultaneous execution of requests and actions on the webapp. Although asynchronous behaviour can be added or emulated using its highly-extensive extensions – this unnecessary layer of abstraction and complication significantly reduces the ease of use and increases the timescale needed in its configuration, making it a non-viable option for this project.

Due to Flask not being a fit, the investigation moved onto Django. Django is a well-known, widely used comprehensive full stack web framework, offering many built in features such as authentication, database, and admin systems, potentially accelerating development times and reducing unnecessary programming. Further research into the framework suggests significant constraints as a result of deviations from standards which would not be known to me, the sole developer, due to lack of experience in said framework which is also known to have a steep learning curve. Furthermore, this enforced structural nature of the framework also reduces flexibility in the creation of a REST-based API, placing it against one of the predefined requirements I set out in search of a web framework. Finally, while newer versions of Django do have asynchronous support, a large portion of its extensions and above described built in features are not combatable, highly reducing efficiency and concurrency benefits – making it an evidently less appropriate option for this project.

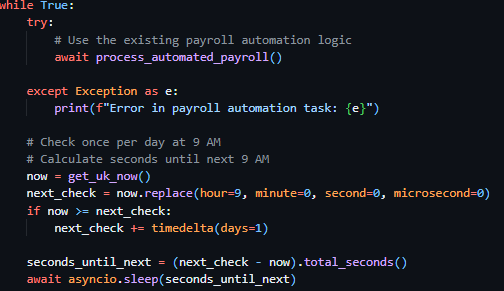

As a result of Django’s limitations FastAPI was considered, an asynchronous Python web framework designed specifically for high performance and RESTful API development, providing native solely asynchronous support, request validation and ease of development through the use of the newly released Python type hints feature – allowing the assignment of data types and structures to variables, creating visible and traceable warnings in the text editor as a result of mistakes. These features call for FastAPI to be a strong candidate for Integrated Workforce Management Platform, seemingly implementing all requirements detailed above perfectly. However, the framework has a significant focus on enforcing strict definitions and extensive documentation requirements, which, while highly beneficial in a corporate, team-based environment, calls for a highly steep learning curve at adoption and additional complexity during development sessions. Due to this project’s requirements, greater control over request handling and minimal abstraction is preferred.

Due to this, the focus of my investigation moved onto Sanic, a lightweight, asynchronous Python web framework “Built from the ground up with speed and scalability as a main concern” Unlike Flask and Django, Sanic is natively asynchronous without the need for the addition of extensions or additional layers to handle concurrent operations, adhering to the requirements of a asynchronous framework. The framework also provides full control over request handling, unlike FastAPI and Django – which enforce strict developmental rules, and excessive documentation requirements – allowing for RESTful implementation without enforced constraints. This level of flexibility is greatly valuable, due to the need of authentication management, operation scheduling and database interactions, all of which would be made much more complex in a strictly enforced environment. Additionally, Sanic has a “highly intuitive API with smart defaults” which is highly inspired by one of the most popular frameworks, Flask, a framework I have significant prior experience with, reducing the learning curve while still benefiting from asynchronous execution. Finally, the framework has useful built-in development tools such as automatic reload on file changes – which will evidently be useful in the development of the frontend - and clear debugging error pages which further reduces development problems, streamlining development and testing workflows.

Programmed Implementation

To solidify my understanding of Sanic, and assure myself of its suitability for Integrated Workforce Management Platform, I wrote a prototype RESTful API endpoint to demonstrate its capabilities. This initial prototype was minimal, focusing only on validating Sanic’s core use cases and suitability for the project. Establishing this, was a significant requirement, ensuring that further prototypes had a functional and usable base to build upon. Allowing development risk to be reduced and securing the base decision for subsequent stages of prototyping and development.

from sanic import Sanic

from sanic.response import json

from sanic.request import Request

app = Sanic("ExampleApp")

@app.get("/hello")

async def hello(request: Request):

return json({"message": "Hello World"})

if __name__ == "__main__":

app.run(host="localhost", port=8000, dev=True)

This developed prototype demonstrates exactly how Sanic handles HTTP requests (in this case a GET request hosted at the ‘/hello’ endpoint) asynchronously as seen by the use of the async keyword before the function definition, ensuring that the server is not blocked when handling requests. Furthermore, the use of decorator-based routing - @app.get(“hello”) – forces endpoints to be clearly defined and supporting a RESTful structure – in which resources are accessed through standard HTTP methods. Additionally, the frameworks’ ability to run the same server for use in development and production environments – demonstrated through its dev=True parameter, which enables both the debugging UI and automatic file reloading, within the app.run API – further reduces development complexity. Finally, its optional inclusion of Python’s modern type hints through its accessible importable types – evident in the importation from sanic.request import Request and its subsequent use in the route function definition parameters async def hello(request: Request): - increases developer ease of use through text editor integration and readability, highly beneficial when maintaining a large codebase independently.

As a result of all prior outlined requirements being met, developer ease of use being prioritised, and its balance between useability and structure. Sanic was chosen as the most appropriate framework for the final implementation of Integrated Workforce Management Platform.

P2 – Client-Server Architecture

Prototype Purpose

For the second prototype, the main purpose was to investigate different architectures and how applicable they would be in the implementation of Integrated Workforce Management Platform. A justified decision is crucial in ensuring the project is scalable, can support concurrent users and allow for modular and maintainable development practices throughout the project.

Method Identification

There are many possible options in the development of an application, with local-only approaches – meaning all data would be stored on the user’s machine – and monolithic single server applications meaning all components of the application were to reside together running as one process, rather than the frontend and backend being developed and deployed independently.

Both these options can be seen to be inappropriate for Integrated Workforce Management Platform, as in the case of a local only approach – multi-user implementation would be inherently impossible due to its lack of a central convergence of data – and while a monolithic application would be considered to be easier initially, the large scope of the Integrated Workforce Management Platform’s capability, maintaining and extending the codebase throughout development iterations would become increasingly difficult as the codebase increased in scale.

Considering these limitations, a client-server approach was chosen for Integrated Workforce Management Platform. This allows for the frontend and backend to be run and developed independently of each other and simplify deployment and scaling through its architecture allowing the server to manage centralised resources (in this case through the backend) efficiently. This also assures security due to all data, logic and computation being performed and held on the server side, without risk of alteration on the aforementioned client side – reducing the possibility of the doctoring of schedules and hours worked, preventing inaccurate payroll reports. Furthermore, this architecture inherently supports separate testing of each area of the application, with the client side and server side being separate, reducing developer testing workload. To confirm the implementation of the approach, a minimal exemplar prototype was developed, showcasing the interactions between the backend framework and a mock frontend example.

Programmed Implementation

This prototype and example output, shown below, consists of a backend endpoint – written in Sanic, the chosen framework in Prototype 1 – that returned mock data and a frontend page – written in html and JS – that requested – through the JS fetch API – and displayed this data. This demonstrates the client, and server would be capable of communicating correctly, validating the use of this client-server approach, improving my understanding of this architecture and showing that it can handle basic request-response cycles as expected. A process essential to the core base of Integrated Workforce Management Platform.

from sanic import Sanic

from sanic.response import json

from sanic.request import Request

app = Sanic("ExampleApp")

@app.get("/hello")

async def hello(request: Request):

return json({"message": "Hello World"})

if __name__ == "__main__":

app.run(host="localhost", port=8000, dev=True)

Above is the same minimal implementation of a Sanic development server presenting an exemplar ‘/hello’ route hosted on localhost:8000 ready for requests by the client.

Following the server-side code, below is a minimal example of a client-side implementation written in pure HTML and JavaScript, which would be able to be ran on any modern web browser.

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<title>Client-Server Prototype</title>

</head>

<body>

<h1>Server Message:</h1>

<p id="message">Loading...</p>

<script>

// Fetch data from the backend endpoint

fetch('http://localhost:8000/hello')

.then(response => response.json())

.then(data => {

document.getElementById('message').textContent = data.message;

})

.catch(error => {

console.error('Error fetching data:', error);

document.getElementById('message').textContent = "Failed to load message.";

});

</script>

</body>

</html>

This prototype above, requests data from the backend route /hello hosted at localhost:8000, this fetch request is facilitated by JavaScript’s Fetch API – as seen by this line fetch('http://localhost:8000/hello'). It then proceeds to identify and single out the message field provided by the JSON response of the backend and replaces a pre-identified field in the HTML body of the webpage with said message – this is evident in this line document.getElementById('message').textContent = data.message;.

Furthermore, though my inclusion of error handling in the client-side prototype, failed server requests are demonstrated how to be handled effectively in response to connection issues – ensuring issues in a real-world implementation do not break the frontend. This error handling can be seen to be implemented via the catch clause inside the fetch function, shown isolated below:

.catch(error => {

console.error('Error fetching data:', error);

document.getElementById('message').textContent = "Failed to load message.";

});

After catching said error, it handles it gracefully, pushing an error message to the console and replacing the <p id="message">Loading...</p> text field with an error message. Notifying the user and preventing total usability of the webpage.

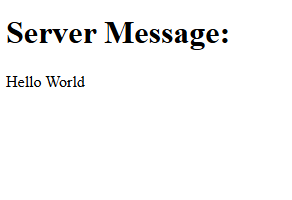

Figure 1 aside shows the output of the above-mentioned client-side prototype, where the message “Hello World” returned from the server is displayed in the browser.

Together, these backend and frontend examples perfectly capture and exemplify the successful implementation of a client-server system, fetching data from the backend and displaying it dynamically directly in a stand-alone frontend system. This proves the client server architecture is suitable for Integrated Workforce Management Platform, supporting security and the system’s ability to scale with additional functionality in subsequent prototypes.

P3 – Frontend Framework

Prototype Purpose

The purpose of this third prototype was to understand and investigate the suitability of different frontend approaches for the implementation of Integrated Workforce Management Platform. The selected frontend framework must adhere to several requirements identified because of the various core features of Integrated Workforce Management Platform and their resultant needs in implementation. Due to the systems nature as an account-based platform, the frontend must be capable of managing; user state efficiently, frequent data requests, regular data updates and dynamic content rending – the process of requests being done in the background (known technically as asynchronous data rendering) without page reloads and visible discontinuity. This behaviour is important to maintain user flow and discourage abandoning of tasks due to unresponsiveness and perceived system latency.

Method Identification

Several frontend approaches were considered during this investigation, ranging from static implementations through the use of vanilla HTML and JavaScript to modern component-based frameworks such as Angular or ReactJS. Each successive option was evaluated in relation to its scalability, responsiveness, maintainability and ability to integrate with a RESTful backend implementation – as seen to be necessary in Prototype 1.

One possible approach considered for the frontend implementation was the use of plain HTML and JavaScript without the inclusion of any libraries or frontend frameworks. This approach can be considered to be the most lightweight implementation option. As a result of the lack of need for external dependencies or build tools, initial setup complexity was heavily reduced – a significant positive for this project due to its nature of being developed by a singular developer. Furthermore, it provides full native control over the DOM (Document Object Model – the interface that represents web pages as trees of dynamic objects allowing scripts to modify it), covering all possible functionality directly through native browser implementation, reducing significantly the possibility of issues that could be introduced through an intermediary framework. Despite these numerous advantages, various significant limitations were identified. As the system increases in complexity, managing a large file structure using only vanilla technologies becomes increasingly difficult, resulting in repeated use of code rather than reusable components as is standard in various libraries, culminating in large file sizes. Furthermore, plain JavaScript provides no mechanism for managing user state and data on an application scale, other than native technologies such as cookies, making the handling of authentication status and coordination of frequent backend data updates much more complex. Therefore, dynamic content rendering would become inherently more complex, due to its manual implementation being a requirement, causing the further repetition of code and increasing the likelihood of logical errors. Additionally, while RESTful communication between the client and server is technically a possibility when using vanilla solutions, all request handling and error management would need to be directly implemented on a per request basis – reducing maintainability and scalability over time, two of the main requirements for a solution set out initially. For these aforementioned reasons, the use of plain HTML and JavaScript was deemed unsuitable for the frontend implementation of Integrated Workforce Management Platform.

Following this, my focus moved to JavaScript libraries such as jQuery, due to its simplification and bundling of common operations used in web development. While these features can be initially considered to be useful, its helper methods – such as those of AJAX requests – and its historical well supported and widely used nature, its focus on DOM manipulation as its core functionality can lead to further issues with code repetition as a result of its inability to separate repetitive reusable operations from explicit edits of the UI. Building on this, its lack of structured application state management inherently makes its unsuitable for complex user authentication and data management flows, which has previously been identified as a fundamental core functionality of Integrated Workforce Management Platform. Furthermore, the majority of the library’s functionality has been made redundant, with most features such as the AJAX request wrappers, being directly present in versions of vanilla JavaScript from 2015 onwards. Therefore, jQuery provides little to no benefit, being widely considered a legacy technology and an unnecessary external dependency with minimal optimisation, increasing page load size and as a result reducing performance statistics. Proving scalability, maintainability and optimisation to be further areas in which it is lacking; all features that are essential to the core functionality of Integrated Workforce Management Platform. As such jQuery is unsuitable for the project.

The third option considered was Angular, which is a fully featured frontend framework (the first framework on this list) including built in tools such as routing functionality to manage the pages internally without needing to maintain multiple servable documents (html files); a point of difference to the previous considered options. Angular also has built in request handling with HTTP client services considered central to the framework’s use cases proving its validity in an architecture based on client server request response; a factor previously outlined as essential for successful delivery of Integrated Workforce Management Platform. These factors make Angular a strong contender as the frontend framework for the project further reinforced by the fact that it improves code readability and error traceability through its enforcement of the use of the JavaScript superset, Typescript, and encourages clear separation of concerns throughout the frontend projects development through its isolation of visual components (the User Interface) and through services (methods that provide functionality to the components). This can be seen as a clear positive as it simplifies development as a whole, dividing two complex parts of frontend development, the implementation of the User Interface visually, and the communication with the backend (data, authentication and user state). Finally, Angular contains built in reactive programming capabilities, mitigating issues of repetitive implementation when dealing with exceptions or errors, through its feature “Observables”. This feature allows for the efficient handling of asynchronous requests on an application wide scale, a point of differentiation and a requirement of this project.

Despite these positives, Angular is more suited for use in large scale and enterprise development, with its comprehensive feature set and enforced structured nature (much of which is described above) in contrast being barriers to flexibility and development timescale in a single developer project such as Integrated Workforce Management Platform. This is evident in its enforced use of TypeScript which is a superset of JavaScript and has significant architectural differences in its syntax. When combined with the extensive feature set mentioned above, this creates a fundamental barrier to entry of the Framework – with the overhead of learning an entire significantly different superset, which many consider an entirely separate language, and the sheer number of architectural concepts and features to familiarise with and adhere to. Therefore, I considered this fundamental steep learning curve to be outside the scope of this project preferring to instead identify an option with both the ease of use of plain HTML and CSS combined with the separation of concerns prevalent in Angular.

My final option considered was ReactJS, a modern frontend JavaScript library designed for building dynamic and highly responsive user interfaces. Combining the structural advantages of frameworks such as Angular with the reduced setup complexity associated with other lightweight frontend solutions. Similarly to Angular, react benefits from a component-based architecture, allowing the frontend user interface to be divided into clearly separated, reusable components. This approach significantly improves maintainability and scalability, as individual components can be developed and tested individually. Furthermore, it significantly improves project management, allowing for the easy organisation and management of different areas of the project – a feature highly essential to Integrated Workforce Management Platform due to it being written by one sole developer, with significant scope constraints.

React additionally implements state driven rendering through the use of a virtual DOM (a lightweight replication of the DOM for easier access in UI manipulation), meaning, as data changes throughout the application, only the specific components using newly refreshed data get reloaded (or rather re-rendered). This directly results in a smoother user experience in the application and further increases performance when compared to manual DOM manipulation approaches – with significantly less strain on the browser. These benefits would be particularly prevalent in applications requiring frequent application wide data updates, such as Integrated Workforce Management Platform – allowing for schedule updates and other data changes to take effect without delay. React’s reliance on asynchronous data fetching via capable native browser APIs allows for clean and efficient integration with RESTful backend implementations, aligning directly with the architecture explored in Prototype 1.

While React does require the use of external libraries to implement certain functionalities such as routing or advanced state management, this was a significant advantage rather than a limitation. Allowing the selection of libraries to be based on the immediate requirements of the project at hand, rather than the provision of a large, predefined toolset such as is done in Angular, further improves performance due to the lack of unnecessary overhead tools and enabling the direct evaluation of specific libraries impact and effect on performance by the developer – allowing for justified and evaluative decisions prior to implementation. Furthermore, this evident flexibility significantly lowers the barrier to entry in the use of React, requiring only fundamental and essential technologies to be learnt and implemented.

Therefore, this flexibility, ease of RESTful integration, efficient state handling and a comparatively low barrier to entry, makes React the ideal choice and highly suitable for the frontend implementation of Integrated Workforce Management Platform. To confirm and widen my understanding of this technology, I chose to create two programmatic implementations, the first exampling its capabilities in its state-driven rendering and component-based user interfaces, and the second exploring its asynchronous data fetching capabilities and RESTful integration with the backend design chosen in Prototype 1.

Programmed Implementation

The first example, identified below, demonstrates React’s component-based architecture – where the user interface can be divided into minimal components, encapsulating functionality with specific areas of the UI – a feature previously described to be essential to the management of such a large complex project such as Integrated Workforce Management Platform.

import { useState } from "react";

function ClockInExampleComponent() {

const [clockedIn, setClockedIn] = useState(false);

function toggleClockedIn() {

setClockedIn(prevClockedIn => !prevClockedIn);

}

return (

<div>

<h2>User Clocked In Status</h2>

<p>Current status: {clockedIn ? "Clocked In" : "Clocked Out"}</p>

<button onClick={toggleClockedIn}>

{clockedIn ? "Clock Out" : "Clock In"}

</button>

</div>

);

}

export default ClockInExampleComponent;

In the above example, the state of a user’s status – being either clocked in or out – can be seen to be managed through the useState hook, imported into the component for use through the line import { useState } from "react";. This allows data to be stored and updated without direct DOM manipulation. When the data’s value changes, React automatically re-renders only the effected component – in this case to switch the buttons label {clockedIn ? "Clock Out" : "Clock In"} and to switch the text shown in the ‘current status’ paragraph element {clockedIn ? "Clocked In" : "Clocked Out"}. Furthermore, these two implementations of text switching between different values, are an example of an additional React feature – inline JSX – allowing for logical decision making to take place inline, alongside traditional HTML tags. In this case the button element triggers the state change through the function toggleClockedIn attributed to the button through the React event onClick. This function, as seen in the line setClockedIn(prevClockedIn => !prevClockedIn); updates the state using the setClockedIn function defined alongside the state data above, a further React feature, through its use of the ! operator, producing the opposite Boolean value to that of the current saved state.

This approach, removes the need for explicit DOM queries and updates, exemplifying the previously explained reduction in code repetition and the likelihood of minor errors. Figure 2 demonstrates this behaviour at runtime, showing the component responding dynamically to state changes during user interaction.

This second programmed example demonstrates React’s ability to integrate with RESTful backend services using asynchronous HTTP requests, validating the decision making in the frontend library of choice through its integration with the client-server architecture identified in Prototype 2 and the exact backend programmed implementation utilised in Prototype 1.

import { useEffect, useState } from "react";

function BackendTest() {

const [backendMessage, setBackendMessage] = useState(null);

const [loading, setLoading] = useState(true);

const [serverError, setServerError] = useState(false);

useEffect(() => {

fetch("http://localhost:8000/hello")

.then(response => response.json())

.then(data => {

setBackendMessage(data.message);

setLoading(false);

setServerError(false);

})

.catch(error => {

console.error("Error fetching server data:", error);

setLoading(false);

setServerError(true);

});

}, []);

if (loading) {

return <p>Loading server data...</p>;

}

return (

<div>

{serverError ? (

<p>Server request failed.</p>

) : (

<p>Message from server: {backendMessage}</p>

)}

</div>

);

}

export default BackendTest;

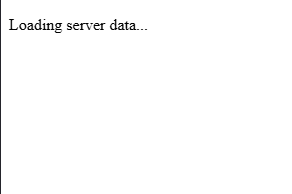

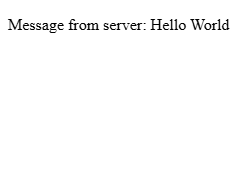

In this example, both useEffect and the previously covered useState are imported – as seen by this line import { useEffect, useState } from "react"; - with useState serving the same purpose as previously, and useEffect used to trigger a backend request when the component is first rendered. This prototype implementation mimics direct production implementations, which typically fetch initial data from the server at page load. This request for data from the server, located inside the useEffect hook, triggers an arrow function – as seen by this syntax () => {}, allowing the functionality of the hook to remain minorly abstracted. The request inside this arrow function, is made using JavaScript’s Fetch API – which is native to the browser, and can be seen to be fetching data from the backend endpoint () in an identical method to Prototype 2. There is multiple state variables defined via the previously discussed useState hook, managing various application states. The backendMessage state stores the message returned by the server, while the loading and serverError states are used to track the progress and outcome of the request, allowing the user interface to shift dynamically referencing the status of the request and its outcomes. While the request is in progress, the component leverages React’s conditional rendering, returning a loading message (as shown in figure 3a) based on the Boolean value of the loading state, ensuring the user receives immediate content, reducing perceived page load times, and preventing the display of incomplete data. On the result of a failed request, error handling logic contained in the catch block (.catch(error => {});) – a feature previously utilised in Prototype 2 – updates the serverError state, allowing the interface to render and display a clear failure message (as shown in figure 3c) rather than a lack of data or dysfunctionality of the application. Finally, on the common occurrence of a successful request, the message returned by the backed, stored in backendMessage, is displayed to the user (as shown in figure 3b) – as can be seen by this line <p>Message from server: {backendMessage}</p>, a further example of inline JSX through its use of state content in the UI component.

Figure 3 below presents the three previously discussed runtime states of the programmed example above, demonstrating the user interfaces capability in responding to loading, successful data retrieval and server error conditions.

This programmed example above highlights React’s support for asynchronous data fetching and conditional rendering, allowing the frontend to remain responsive and informative during rare cases of backend failure. It also further illustrates benefit of its separation of concerns, with the backend communication logic isolated within the effect hook and presentation logic handled through the JSX response – an implementation of this isolation in a much more efficient method compared with previously considered options.

As such the combination of both programmed examples and the prototype confirms that React is well suited to consuming RESTful APIs and maintaining a developer friendly and responsive user interface for Integrated Workforce Management Platform.

P4 – Database Integration

Prototype Purpose

The purpose of this fourth prototype was to investigate and programmatically demonstrate suitable database technologies and their theoretical implementations, building upon the previous prototypes, for the persistence of application data within Integrated Workforce Management Platform. As a result of the system’s account-based nature, the database solution was required to support structured data, reliable relationships between different data constructs and efficient querying – as to not slow down the usage of the application. Efficient querying being particularly important due to Integrated Workforce Management Platform’s inherent frequent requirement of data, with regular queries necessary on page loads to supply data such as employee schedules and user information. This prototype specifically focuses on the evaluation of effective integration of various persistent storage options, access methods and their subsequent integration with the predefined backend architecture identified in Prototype one.

Method Identification

Several database approaches were considered during this investigation, including relational (SQL based) and non-relational (NoSQL based) database models.

One option for persistent storage throughout the application is a NoSQL database, while these take many forms, their fundamental principles minimally differ. NoSQL databases, such as MongoDB, store data in flexible, non-predefined structures such as documents or key-value stores. This can be seen to be a highly beneficial factor, with their flexibility being significantly advantageous in situations where data-structures change frequently and data relationships are minimal. Additionally, NoSQL databases are often optimised and designed with performance and scalability in mind, with their schema-less nature removing the forced and predefined structures evident in SQL databases, allowing data to be written and read with fewer constraints.

However, as per the predefined requirements, Integrated Workforce Management Platform relies heavily on structured and relational data – such as hours worked and schedules being linked to specific users. With the implementation of such features in a NoSQL database being a significantly more difficult and manual task, requiring manual attribution between items of data at the application level rather than being implemented within the database itself, this would highly increase the already large scope of the project alongside its complexity, increasing the likelihood of logical errors. In which, when applied to this project, could surface as the misalignment of individual items of data – causing significant privacy issues, with Integrated Workforce Management Platform dealing with payment scales and personal information. For these reasons a NoSQL database was deemed unsuitable for this project, with the investigation moving to evaluate the efficacy of relational SQL based databases.

In contrast to NoSQL databases, their relational counterparts store data in clearly defined table structures, enforcing relationships through foreign keys and ensuring data integrity. These inherent design factors directly mitigate issues previously discussed, with the lack of manual implementation of relationships being highly beneficial in relation to this project, reducing developer workload and largely streamlining the development of the backend API. While NoSQL can be faster than relational SQL databases for certain unstructured workloads, the provision of strong consistency and their robust enforcement of standards significantly outweigh any possibility of a minimal performance decrease. Making SQL databases highly suitable for applications such as Integrated Workforce Management Platform, where accurate tracking and attribution of hours, schedules and sensitive user information are fundamental core features of the platform. Additionally, SQL’s structured nature and built-in relational capabilities significantly simplify querying, reporting and enforcing application wide rules, reducing the likelihood of data errors and improving maintainability over the long term. As a result, a relational SQL database was selected for the implementation of Integrated Workforce Management Platform.

With the selection of a relational SQL database for Integrated Workforce Management Platform, the next consideration was the method of interaction with the database itself, traditional approaches such as the use of raw SQL queries being one option, and the use of an ORM (Object Relational Mapping) library being the other. Given the large scale of this project and the inherent complexity of managing multiple related tables and ensuring consistent access throughout Integrated Workforce Management Platform, the use of an ORM was deemed the more effective and maintainable approach. ORMs reduce the developer burden of boilerplate SQL code required for common operations, enforce consistency of queries, access and table structure, and integrate significantly more seamlessly with the backend framework – often providing type hints enabling traceability throughout the code editor – reducing the likelihood of errors, such as leaving injection vulnerabilities, compared with writing raw SQL queries directly.

The following section evaluates three ORMs compatible with Sanic – Mayim, Tortoise-ORM and SQLAlchemy – to identify the most suitable solution for Integrated Workforce Management Platform.

The first ORM to be considered was Mayim, an SQL-first one-way solution. Due to its SQL first approach, the fine-tuning of queries for performance and precise behaviour is made simple, enabling the writing of queries inline or in separate .sql files. This unique to the implementation of an ORM facilities the full control over database queries, and can be highly beneficial for specific, performance-based queries. However, Mayim lacks the high-level abstraction of database interactions sought after during the solidification of the decision to choose an ORM over raw queries, producing significant limitations in relation to query structure and data management. For a project such as Integrated Workforce Management Platform, this ORM specifically fails to mitigate the additional manual workload associated with raw SQL queries and increases the risks of errors – due to the project requiring multiple interrelated tables, and reusable models. While Mayim can be considered a viable option for small-scale or highly custom applications in which queries must be optimised – its SQL first nature widely reduces its suitability for this project, due to its lack of a structured and maintainable approach to database interaction and management.

The second ORM to be considered was Tortoise-Orm, ‘a lightweight, async-native Object-Relational Mapper for Python’ . In comparison with Mayim, Tortoise-ORM provides a high-level of abstraction over database interactions, removing any need for boilerplate SQL code – enabling model-based interactions and development. Its asynchronous first nature, makes it a significantly compatible candidate for use with the Backend framework, Sanic, chosen in Prototype 1, providing the functionality needed to continue the concurrent request handling capabilities it showcases. Furthermore, Tortoise-ORM’s syntax can be considered to have a significantly minimal learning curve, with highly simple methods to define models, relationships and data querying. This paired with its inherent support for relational integrity – enforcing the use of primary and foreign keys, and its clear distinctions between different data relationships – initially makes it a highly viable candidate for use in Integrated Workforce Management Platform. Despite these numerous benefits, the ORM has significant limitations when deployed in a complex, large scope project such as Integrated Workforce Management Platform. One significant limitation being its severely limited documentation, with minimal amounts of advanced issues listed – causing debugging to require significantly more trial and error and external resource use compared to more popular solutions. This can cause significant issues because of the scope of the implementation required for Integrated Workforce Management Platform, making the discovery of niche issues almost certain. Furthermore, its limited integrations with existing libraries in the Python ecosystem could significantly restrict expandability in future iterations of the project. While Tortoise-ORM is well suited for small to medium asynchronous projects, the combination of limited documentation and reduced ecosystem support makes it a significantly less ideal solution for a large, single-developer lead project such as Integrated Workforce Management Platform – in which maintainability, organisation and flexibility are the foundation of requirements.

The final ORM considered was SQLAlchemy 1.4, a highly flexible, mature, and well known ORM widely used in Python development. SQLAlchemy, by design, provides a high level of abstraction for use in regular implementation, while retaining the ability of fine-grained SQL query control - allowing both rapid development through its ORM layer and specific query optimisation to occur based on the needs of the situation – an ideal balance between flexibility and control. This is highly beneficial to Integrated Workforce Management Platform, as it enforces the structure and organisation needed to manage a large project, while also presenting the developer with the capacity to control selected queries when needed – a level of flexibility sought after in a project of this size. Additionally, its support for robust relational models, including foreign keys, composite keys and relationships directly align with the structure requirements set out for Integrated Workforce Management Platform. Furthermore, because of its maturity in the ORM space (released in 2006), it has an extensive ecosystem boasting significant documentation breadth – a benefit lacking in other ORMs considered -, active community support through official forums – significantly reducing the risk of insurmountable errors occurring. Furthermore, with its new 1.4 release, its compatibility with asynchronous workflows integrates seamlessly with Sanic – allowing for concurrent operations a requirement set out previously for Integrated Workforce Management Platform. Its integration with modern python type hints increases maintainability and allows queries to be traced directly to models of tables – reducing errors associated with mistypes of raw SQL queries. SQLAlchemy’s main drawback is its significant learning curve, particularly when implementing asynchronous queries and advances features. However, due to prior experience with the ORM, its extensive documentation and active support channels can be seen to outweigh the singular drawback – with its combination of flexibility, structured relational support and asynchronous compatibility deeming it as the most suitable ORM for Integrated Workforce Management Platform, meeting all the projects requirements and its lack of drawbacks compared to other considered options.

Programmed Implementation

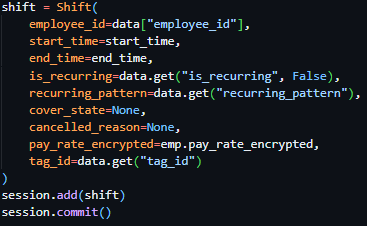

To further expand my knowledge, understand implementation and verify compatibility with existing solutions, I created a minimal programmed prototype – utilising Sanic as the backend web framework, as was decided in Prototype 1. This programmed implementation spans two files, database_init.py handling database setup, model definition, and asynchronous session management, and sanic_app.py defining the backend API endpoints for interacting with the database. The prototype demonstrates how Integrated Workforce Management Platform can persist structured user data, perform queries asynchronously, and respond to client requests efficiently, reflecting the core functionality expected in the final system.

"" database_init.py ""

from sqlalchemy.ext.asyncio import AsyncEngine, create_async_engine, AsyncSession

from sqlalchemy.orm import declarative_base, sessionmaker, relationship

from sqlalchemy import Column, Integer, String, Float

Base = declarative_base()

class User(Base):

__tablename__ = "users"

id = Column(Integer, primary_key=True)

name = Column(String(100), nullable=False)

email = Column(String(150), unique=True, nullable=False)

role = Column(String(50), nullable=False)

hours_worked = Column(Float, default=0.0)

# Async engine setup (Using SQLITE can be switched to any SQL database)

DATABASE_URL = "sqlite+aiosqlite:///./example.db"

engine: AsyncEngine = create_async_engine(DATABASE_URL, echo=True)

# Async session setup

async_session = sessionmaker(

engine, class_=AsyncSession, expire_on_commit=False

)

# Function to create tables

async def init_db():

async with engine.begin() as conn:

await conn.run_sync(Base.metadata.create_all)

In this file above, the general schema for the database user table is defined. The first key implementation of SQLAlchemy specific logic can be seen in the declaration of the base class with Base = declarative_base(), establishing the foundation for all subsequent models – effectively allowing Python classes to be interpreted by the ORM and directly mapped to SQL database tables. This directly enforces structure and consistency between all proceeding tables and reduces the manual boilerplate code significantly.

The User class (class User(Base):) uses the base variable in its class parameters, causing the User class to be a direct extension of the Base class – creating a users table with several columns: id, name, email, role, and hours_worked, with each column being generated from each class attribute when assigned the Column function. The Column function requires numerous parameters, similarly to that of a regular SQL column definition (id int PRIMARY_KEY),) allowing control over column data types through provided attributes (as seen by the import statement from sqlalchemy import Column, Integer, String, Float), and column rules as seen by nullable=False, unique=True, default=0.0 and primary_key=True. This allows the ORM to enforce data types not only on the database level, but also on an ORM level – reducing chance of data type incompatibility and showcasing SQLAlchemy’s ability to maintain relational integrity.

Following this, the asynchronous database connection is configured through create_async_engine(DATABASE_URL, echo=True). This directs SQLAlchemy to the database of choice, in this case being an SQLite database (sqlite+aiosqlite:///./cascade.db). Which is then subsequently utilised in the initialisation of the database session, associated with the variable async_session, in which the previously initiated engine is passed alongside other configuration indicators, allowing for the sessions use throughout the application.

Finally, the asynchronous function init_db is created, with its interior code beginning the connection with the database directly (through async with engine.begin() as conn:) then running the attributed async run_sync function and passing in the previously defined Base class, choosing to create all tables. Providing a function able to be ran on application startup, ensuring the database is established prior to queries.

With the database schema and initialisation defined above complete, the file below will reference and use this – showcasing an asynchronous API endpoint, written in Sanic, for retrieving user data and integrating SQLAlchemy sessions with the previously chosen web framework.

"" sanc_app.py ""

from sanic import Sanic, json

from sqlalchemy.future import select

from database_init import init_db, async_session

app = Sanic("ExampleApp")

# Run DB initialisation on app startup

@app.before_server_start

async def setup_db(app, loop):

await init_db()

async with async_session() as session:

# Example data

if not await session.scalar(select(User).where(User.id == 1)):

session.add_all([

User(name="Alice", email="alice@example.com", role="Manager"),

User(name="Bob", email="bob@example.com", role="Staff")

])

await session.commit()

@app.get("/user/<user_id:int>")

async def get_user(request, user_id):

async with async_session() as session:

query = select(User).where(User.id == user_id)

result = await session.execute(query)

user = result.scalar_one_or_none()

if not user:

return json({"error": "User not found"}, status=404)

return json({

"id": user.id,

"name": user.name,

"email": user.email,

"role": user.role,

"hours_worked": user.hours_worked

})

if __name__ == "__main__":

app.run(host="localhost", port=8000, dev=True)

Similarly to the programmed implementation presented in Prototype 1, this example is built upon Sanic and is a functional but minimal example of the capabilities of the identified technologies.

Initially, the example imports various methods required for the initialisation of the Sanic server – identically to Prototype 1 – with the only addition being from database_init import init_db, async_session, importing the database initialization function and session state from the previous file.

The previously implemented and newly imported function async_session is executed on server startup, through the @app.before_server_start decorator, attributing the function setup_db to the be bran on server startup. This function, while calling the initial setup of the database from database_init.py then proceeds to fill the database with example data, as can be seen by the session.add_all function containing column data entries - User(name="Alice", email="alice@example.com", role="Manager") – with the required fields filled. This allows the example implementation to function, giving the database data to query.

Following this a route is declared, this is done in the same way as the prior implementation in Prototype 1, with the addition of a route variable – allowing the passing of a user ID into the API call, to get the data associated with a specific user. This is seen through the use of the greater-than and less-than signs in the route definition ("/user/<user_id:int>"), encapsulating the assignment of the data to a variable (in this case called user_id) and its datatype – an integer in this instance. This variable is then added to the function declaration through its attributes - async def get_user(request, user_id): - ready to be injected into the function at runtime alongside the request data by Sanic.

Within the endpoint, an asynchronous session is created using async with async_session() as session. The SQLAlchemy select function constructs a query to retrieve a User object matching the requested user_id, which is executed asynchronously via await session.execute(query). The scalar_one_or_none function is then used to fetch either a single matching result or return None if no user exists, enforcing strict retrieval behaviour and reducing potential errors from unexpected results.

Conditional logic, in the form of an if statement, follows this to handle the case of the scalar_one_or_none function returning false, indicating the lack of a user with the provided id. If this is the case, the endpoint responds with a JSON error message and a 404 status code, demonstrating clear error handling in the API. If the query is successful, the user data is returned in a JSON object, showcasing both the ORM and Sanic’s capabilities in following RESTful practices, mapping the returned SQLAlchemy model attributes to JSON keys. Exposing the relational database’s content in a conditional and structured format, validating the capabilities of both the relational database and the ORM.

Overall, this prototype validates the suitability of SQLAlchemy through its tight integration with Sanic and as a result proves it to be ideal for use in Integrated Workforce Management Platform. The implementation demonstrates that structured relational data can be defined, initialised, and queried asynchronously without introducing unnecessary complexity. Furthermore, the successful integration between Sanic’s asynchronous request handling and SQLAlchemy’s asynchronous ORM layer confirms that the chosen technologies work in unison in a backend environment. The ability to safely initialise the database on application startup, seed data, and perform queries through RESTful endpoints proves that this architecture is both robust and extensible. As a result, this prototype not only confirms possible compatibility, but also proves that the chosen database and ORM approach is an effective solution for the management of sensitive relational data within Integrated Workforce Management Platform.

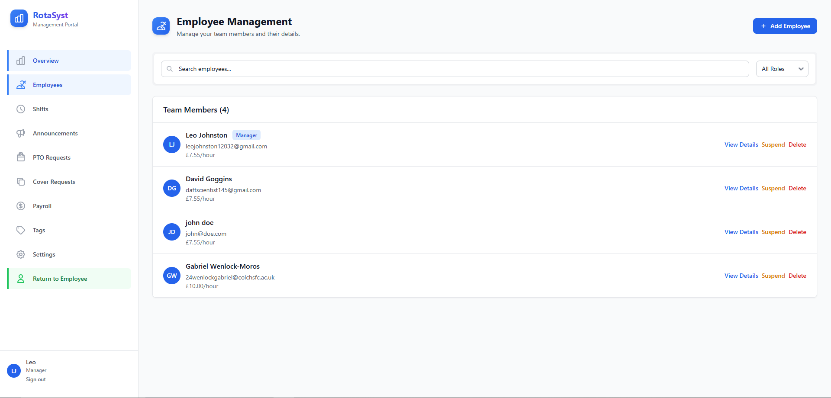

Iterative Development

Throughout the creation and programmed implementation of Integrated Workforce Management Platform, the process and progress in its development will be documented through a series of implementations – building upon previous iterations to create a result of a fully functional project. These iterations will illustrate the stages in which each MOSCOW MUST requirement is covered, directly proving the end result produces a project that is compliant with my predefined requirements, with the hopeful inclusion of COULD and SHOULD requirements in the final project if constraints permit.

Iteration 1: M14 – Database Initialisation, Model and Table creation

To start the development of Integrated Workforce Management Platform, the first core feature I chose to develop was the database due to its role as a foundation for the entire system. Using the technology, I evaluated and chose in the technical prototyping stage, SQLAlchemy, I proceeded to initialise the database.

Firstly, I needed to install the external library, SQLAlchemy, to use throughout the project. To do this, I made use of the package manager that is deployed alongside many Python installers (PIP) and ran pip install SQLAlchemy on the system in which my programming was due to begin. This use of a package manager ensured that I received the most recent publication of said library, enabling me to make full use of it.

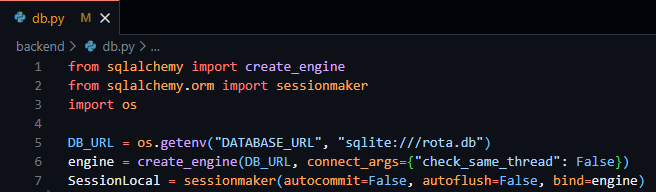

In starting to make use of this library and to initialise the database, a project directory was required to be built out. Inside the root of the project, I created two sub-directories, Frontend and Backend – allowing me to further solidify the abstraction of the two separate aspects of the project. Following this, inside the Backend directory I created an app.py, while empty this file enabled me to plan the structure of the project from the beginning. I then proceeded to create a file called db.py – in which I was finally able to start database initiation. Inside this file, I proceeded to import the required classes and functions provided by SQLAlchemy that were necessary to facilitate the initialisation of the database, the same code that was demonstrated in the technical prototyping phase when testing the validity of SQLAlchemy for the project. This code, as seen in figure 14 below, imports both the create_engine function and the sessionmaker class and uses them in the creation of the database, passing in the database URL as fetched through environment variables or as seen to default to “sqlite:///rota.db”.

Figure 14 - db.py file showing the creation of the database engine and local session of the database.

This file enables the fundamental access to required database variables throughout the project through the simple importation of the db.py file.

As a result of the foundation of the database access having been created, I then moved on to the creation and design of the database tables required for Integrated Workforce Management Platform. These database tables, implemented through SQLAlchemy models follow the exact tables outlined in the external storage section above, following the same field names, validations and data types.

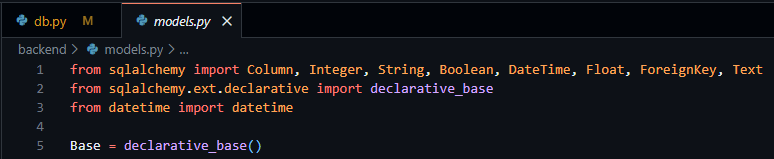

In starting to create these tables, I created a new file, models.py, enabling me to keep my database models separate from other parts of the code. To create the basis of this file prior to the creation of these tables/models I was required to import all the datatypes I would need (as outlined in the external storage section) and the base derivative class – both provided by SQLAlchemy. I then proceeded to create a variable called Base to easily access the declarative base class required to inform SQLAlchemy that my classes were to be converted to SQL tables, as seen below in Figure 15.

Figure 15 - models.py file showing the importation of necessary data types and declarative base from SQLAlchemy.

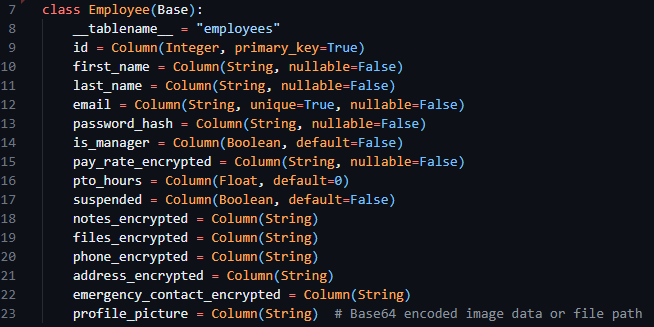

After this, I proceeded to convert the table designs from the external storage design section into SQLAlchemy compatible classes to be turned into database tables. The overall process consisted of the creation of a new child class with a descriptive title and the inclusion of the Base variable in the class declaration. Following this, in the body of the class a __tablename__ variable was required to be set, indicating to SQLAlchemy the exact table name to set. Then finally, using the data classes provided by SQLAlchemy I was able to create new variables inside the class consisting of the field name (the variable declaration) and the datatype (as the variable definition) with any associated configuration options being available through the parameters of the datatype itself. I then went on to implement this for every table I outlined in the previous sections, below the Employee class can be seen in figure 16.

Figure 16 - The Employee model, from models.py file.

I then imported the SessionLocal and all database models into my app.py file, enabling the database to initialise alongside the future code to be implemented in app.py.

Testing

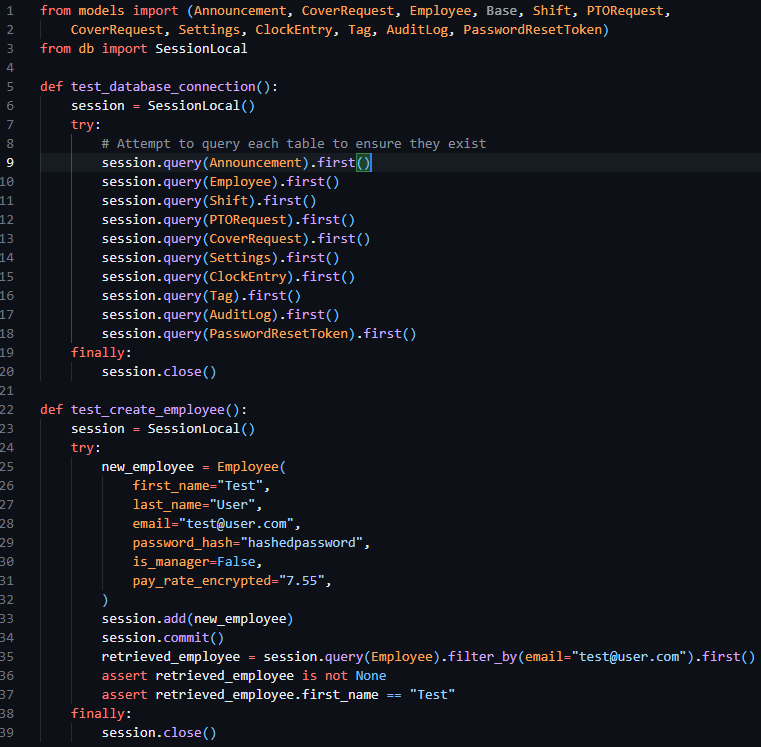

To test this iteration, I ran the app.py file verifying the creation of the database tables and checking to make sure they were composed as expected. Then proceeded to add some placeholder data into the table and verify that insertion and selection operations to the table occurred as expected. The code to test this implementation can be seen below in figure 17.

Figure 17 - app.py file containing tests to check the creation of the tables and the creation of mock data.

Iteration 2: M1, M15, M18 – Authentication: Login

This second iteration aims to fulfil the implementation of MUST requirement 1, through the implementation of secure login and session handling, enforcing authentication on routes and laying the groundwork for permission-based access in future iterations.

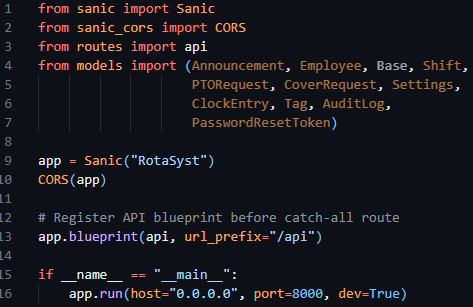

I started this iteration by laying the groundwork for the implementation of routes, as this was an essential prerequisite to authentication. As per previous technical research prototypes, I initialised the Sanic application in the app.py file, creating the Sanic application and enabling the running of the server through app.run. I then proceeded to make use of the sanic_cors library, which was included with the install of Sanic itself, allowing me to add CORS rules to the Sanic application. Following this, I then registered a Sanic blueprint, allowing me to define and contain my API routes in a file separate to app.py, increasing modularity and separation of concerns. This can be seen below in figure 18.

Figure 18 - App.py file initialising the Sanic application, starting the server, configuring CORS and registering the routes blueprint.

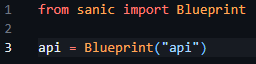

As a result of my implementation of the blueprint, a routes.py file had to be created, containing the base code to create said blueprint and enabling the import and reference to the blueprint called API in app.py to function correctly. This can be seen below in figure 19.

Figure 19 - Creation and initilisation of the route blueprint in routes.py

Now my initialisation of the Sanic app and creation of route handlers had been finalised, I moved on to creating helper functions and decorators to produce the desired requirements and aims previously outlined.

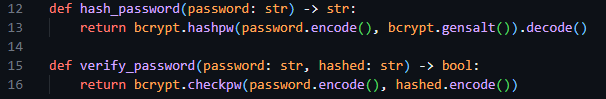

In keeping with my requirement of separation of concerns, I chose to separate the authentication management system from the routes themselves and created a new auth.py file and imported this in my routes.py file, enabling it for subsequent use in this prototype. In the auth.py file, I initially created two helper functions, hash_password and verify_password, enabling easy access to hash functionality. These functions made use of hashing algorithms provided by bycrypt, and through their implementation inside these functions bundled configuration options automatically – reducing the chances of issues arising of mismatched hash configurations.

Figure 20 - Snippet of the hashing helper functions previously mentioned.

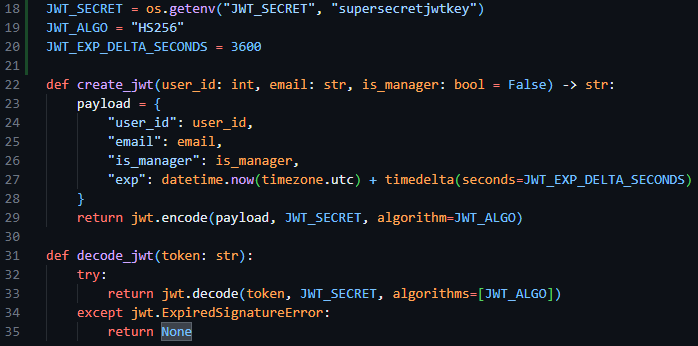

I then proceeded to create two further helper functions for use in this file that enable the encoding and decoding of session JWT tokens, create_jwt and decode_jwt. Allowing me to standardise the creation of my sessions. When creating these functions, I declared three constant variables to define the JWT secret (used to encode the data), the JWT algorithm (the type of encoding I required) and the session expiry – with 3600 seconds and HS256 being the respective session expiry time and encoding algorithm.

Figure 21 - Snippet of code showing the JWT encoding and decoding algorithms and their constant variables.

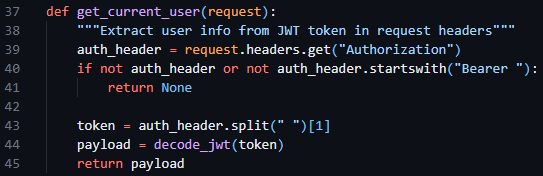

Following this, I then created a get_current_user helper function, enabling me to fetch the current user info from the request headers, making use of the decode_jwt function.

Figure 22 - The get_current_user function in auth.py requesting the session token from the request headers and decoding it.

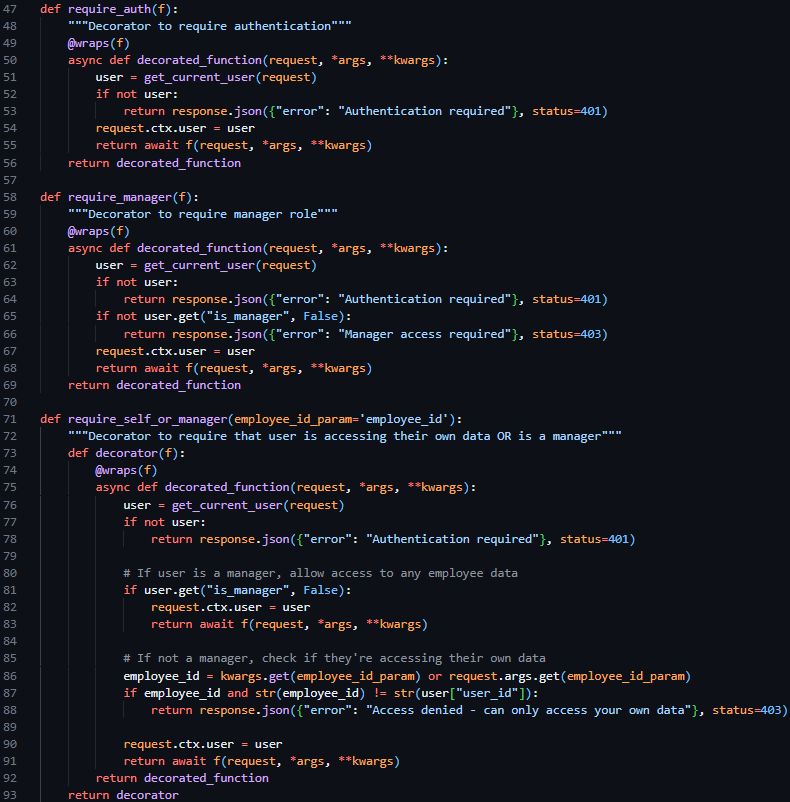

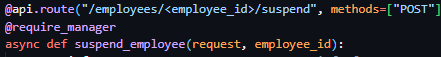

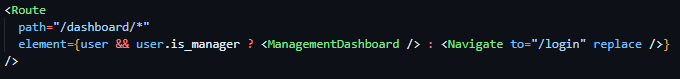

I then proceeded to create three decorator functions to be placed above route declarations in the route.py file, @require_auth, @require_manager and @require_self_or_manager. The first restricting access to the route if a valid session token is not included in the request, the second restricting access to the route if the user does not have managerial permissions, and the only allowing access to the route if the user is a manager or if the user if requesting their own data. These three decorator functions, which were able to be used in conjunction with each other, enable the easy restriction of HTTP routes per predefined cases – reducing the amount of repeated code needed.

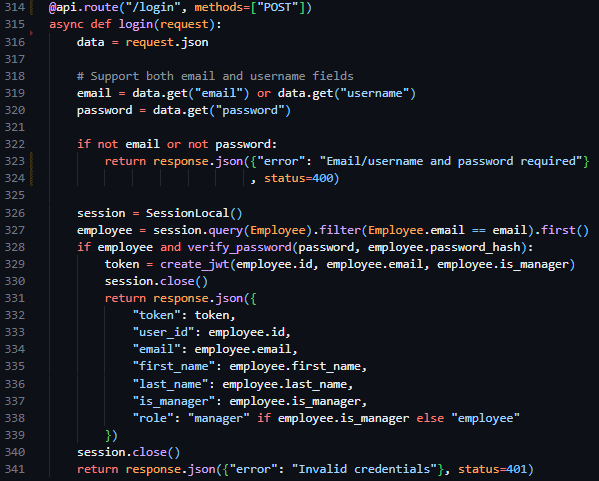

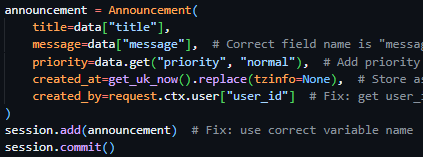

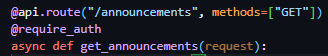

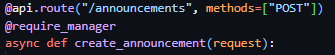

After the creation of these base authentication features, I proceeded to the core implementation of a Login endpoint, which aimed to take in an email and password in the request body, verify the email and password against predefined security and data type standards and return either a success response and the JWT session token or a failure response.

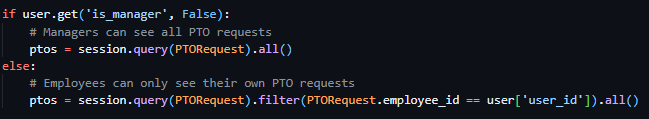

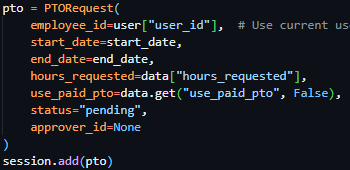

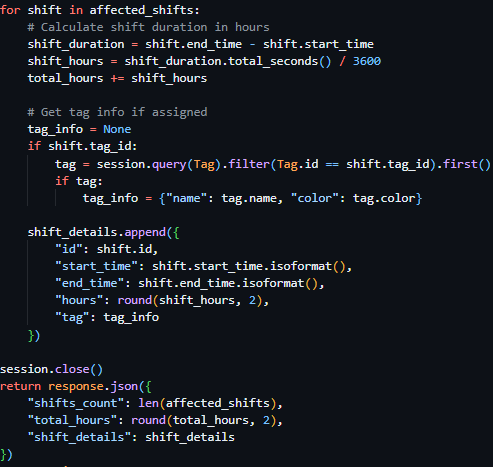

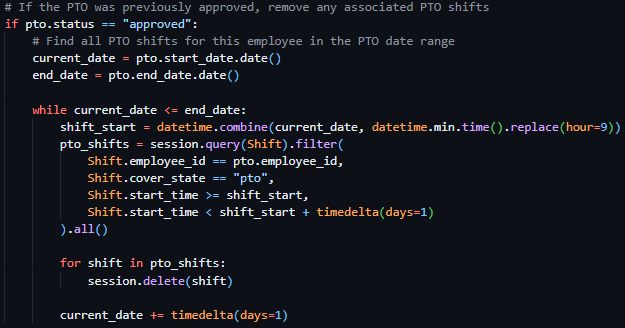

Therefore, to implement this route, I created a new route handler in routes.py (only accepting POST requests), initialising the route similarly to the way in which I had done in technical prototyping, but rather than be derived from the app class – derived from the api blueprint we defined previously. I began by converting the body of the request into JSON format, then verifying the presence of an email and password. Proceeding this I initiated an instance of the previously created database session, querying the users email against the Employee table, and returning Invalid Credentials if the query fails to return user data. Then, once the user is deemed to exist, and the provided password has been compared to the hash stored in the database using the verify_password function, I make use of the create_jwt function, close the database session and return the newly created session token and associated user data through a JSON response.

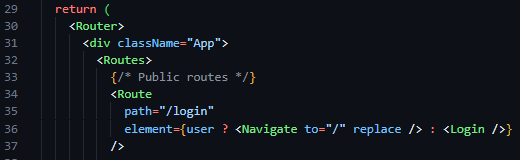

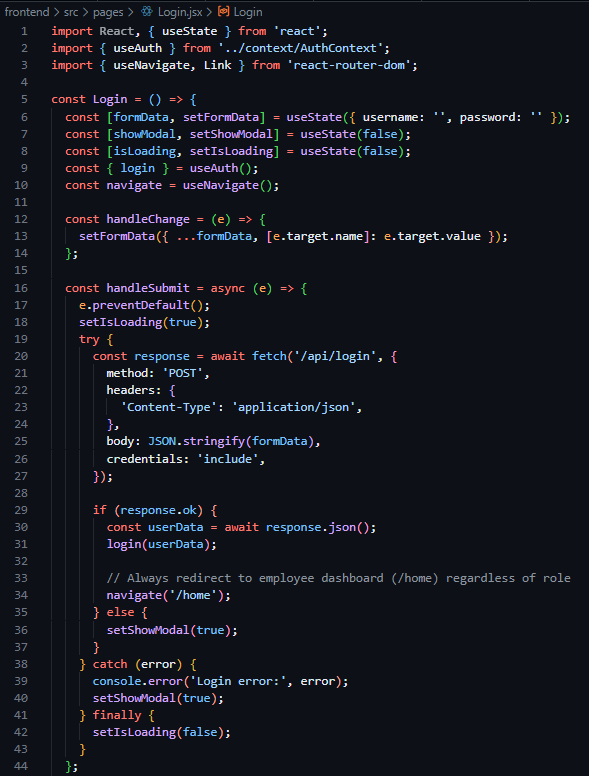

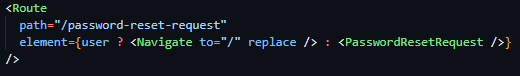

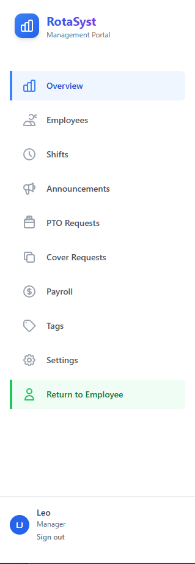

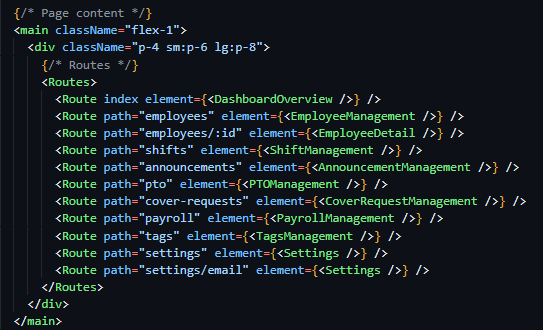

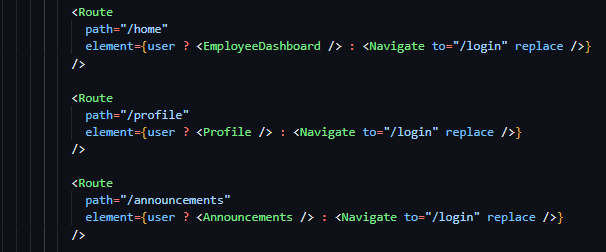

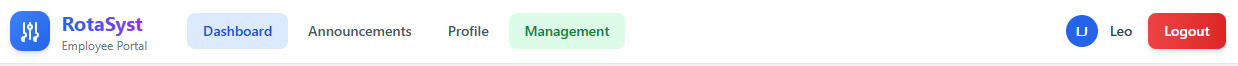

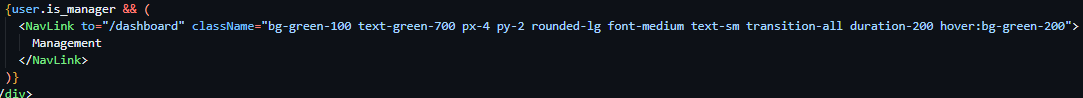

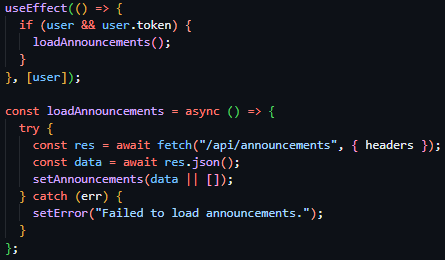

As a result of the completion of the backend routes required to facilitate authentication functionality, I then moved on to the implementation of the login page on the frontend side of the project. Aiming to follow the structure of the designs outlined in the screen design section, I initialised a new React project and installed react-router-dom, a library that builds on the capabilities of React, and Tailwind CSS, an easy-to-use wrapper over CSS – allowing for styles to be implemented inline with HTML tags. I then initiated the react router in the App.jsx file in the newly populated frontend directory (files auto populated by the react setup process), and defined my initial route ‘/login’, and linked it to a newly created Login component in the newly created pages directory.

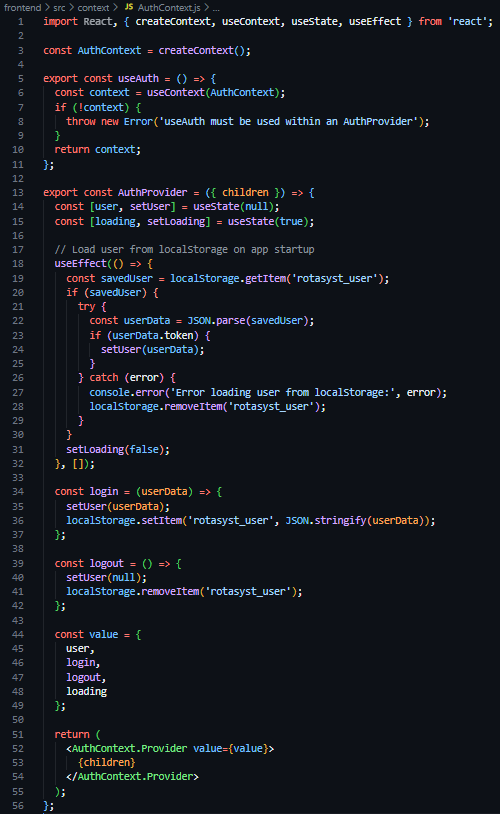

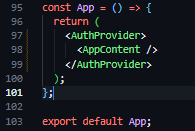

As a result of these frontend structural developments, I proceeded to then create a new directory in the frontend section of the project called context, containing a new file called AuthContext.js – a file to store, define and manage the authentication and state of users throughout the application. In this file I then defined a new constant of AuthContext, a derivative of the React provided createContext function, created and exported a useAuth function, and again created and exported a AuthProvider component. This AuthProvider component, which was wrapped around the element tree of the application (see Figure 27), loads the user from its session token saved in LocalStorage on page load, stores the state of the currently logged in user in useState hooks, and provides helper functions (login and logout) to facilitate the destruction and creation of LocalStorage values.

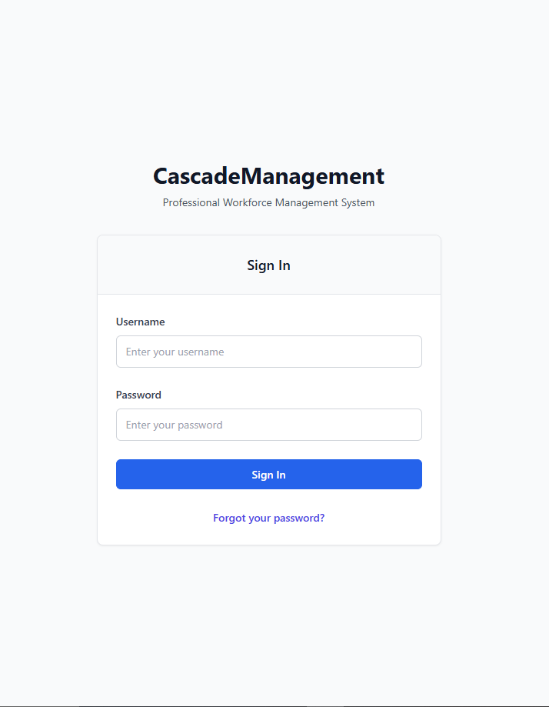

Moving back to the Login component (located at pages/Login.jsx), the next task was to implement the UI of the login page. I started by creating a creating an invisable box that flexed to the size of its contents with internal padding, then filled this with two horizontally centered items an h1 title stating ‘Integrated Workforce Management Platform’, and a p tagline stating ‘Professional Workforce Management System’.

Following this, I then created a white card with outline shadow (see figure 30), with a title of ‘Sign In’ in large text. After this, I created the HTML form, and a placeholder function called handleSubmit in its onSubmit attribute, with a username text field and a password password field, both including a callback function handleChange in their onChange attributes. Below these, I added a blue button with the text ’Login’ and a blue ‘Forgot Your Password’ hyperlink. You can see these changes aside in figure 31.

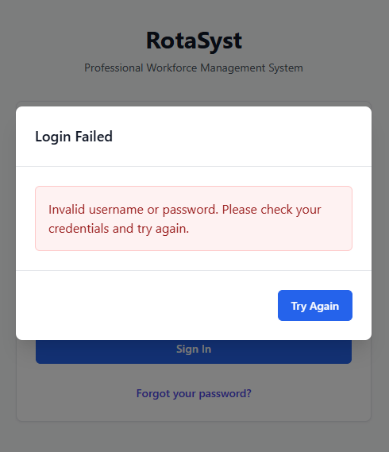

Following this completion of the implementation of the UI design, the functionality behind the page was required to be implemented. Beginning with the creation of these event related functions, I created a handle data change function that stores the new field data in a useState hook called formData in a key value pair – with the field name as the key and the new content as the value. This allowed me to have stored copies of the field data to use when creating the handleSubmit function, which after preventing the default action of HTML forms, set a loading state (indicating to the button content to convert to an animated spinner) and dispatch an asyncronous POST request using the native JavaScript fetch API to the ‘/api/login’ backend endpoint I recently created, populating the body with a string version of the key-value store that is formData. Following this, I added an if clause, checking if the response was successful, in which case it would make use of the previously created useAuth custom hook and save the provided data to global state via the login function assosiated with it, then subsequently perform a redirect to ‘/home’. And in the case of an error arrising, a modal would be revealed, stating ‘Invalid username or password. Please check your credentials and try again.’ In large text with a blue button below with the text ‘Try Again’.

Testing

To test this iteration I created various situations in which I could predict the excpected functionality of the system and record the outcome.

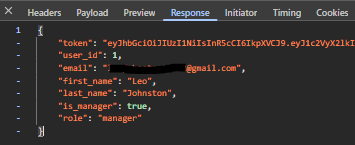

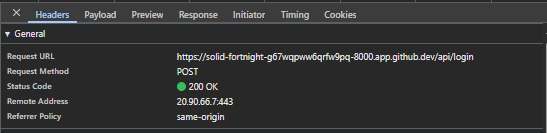

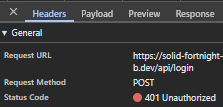

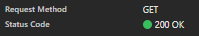

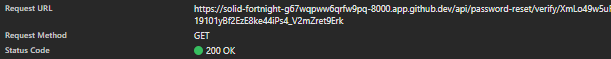

Firstly, I provided the login page with the credentials that were present in the Employee database. I excepted a successful request presenting the HTTP status code of 200, the recieval of the data assosiated with the user, the recieval of a session JWT token and a UI redirection to ‘/home’. The Figures below (33 & 34) capture both the network response and resultant data provided by the API, proving the system to function as intended.

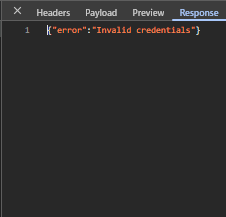

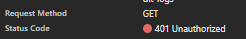

Following this, the next test I shall produce will input invalid credentials into the login page, spesifically an incorrect password in the password field. This should produce a 401 error in the network tab with the response stating ‘Invalid Credentials’, and the UI should reveal an error modal. The three figures below (35, 36 & 37) show evidence of the network response, response body and UI response – showcasing the system functions as intended.

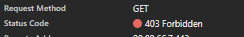

For the final test, I shall create a mockup protected route making use of the @require_manager decorator I previously created. This route shall be tested in three ways, when lacking a token, when possessing only an employee token and when possessing a manager token. The expected behaviour is as follows: when lacking a session token, the route should respond with a 401 status code, when possessing a session token without managerial permissions the route should respond with a 403 status code and finally when possessing a session token with managerial permissions the route should respond with a 200 status code. The three figures below, (38, 39 & 40) show evidence of these network responses for the various situations and directly prove the system functioning as intended during development.

Iteration 3: M1, M19, M15, M18 – Authentication: Password Reset

The goal of this iteration is to build upon the previously started authentication system by building the functionality behind the ‘Forgotten your password?’ button on the Login screen. Delivering secure password reset functionality through expiring one time token-based links sent to the provided email address.

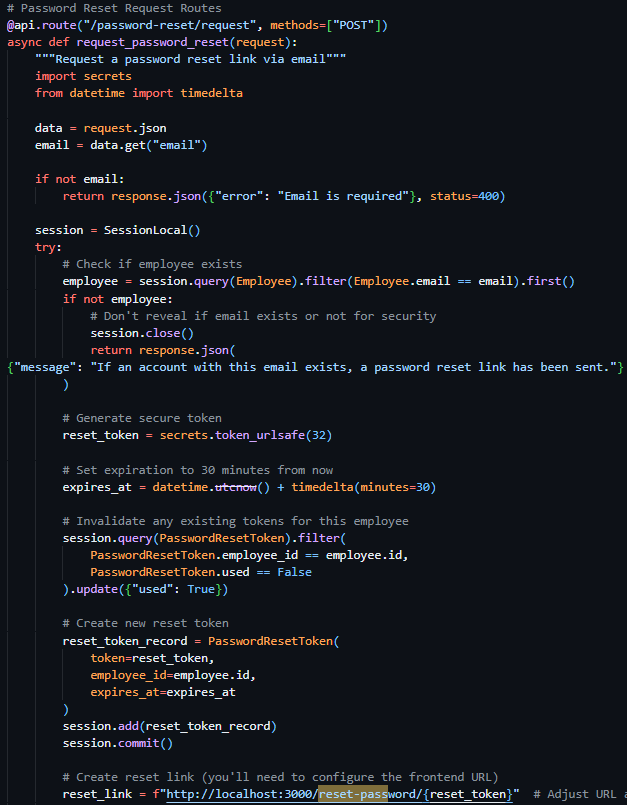

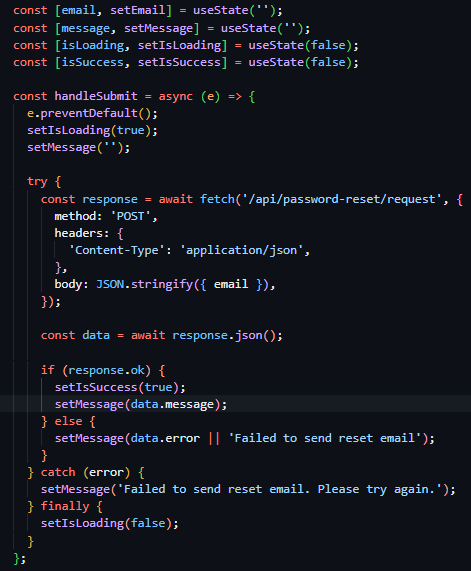

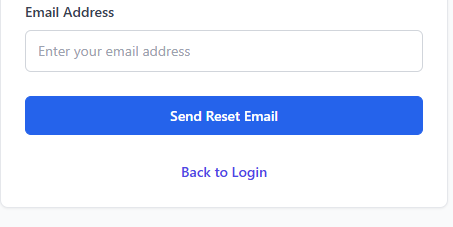

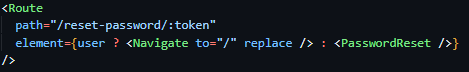

Due to the ResetPasswordToken model and table already existing due to implementation in the initial iteration, the initial focus of this iteration was to create the routes required for this functionality to be accessible. I identified 3 main routes needed to implement this functionality: ‘/password-reset/request’, ‘/reset-password/<token>’, and ‘/password-reset/confirm/<token>’.

The first route (see Figure 41), a POST route in the routes.py file, requiring an email address in the body, initially queries the database Employees table to verify the existence of a user with the provided email address. If no user is found, the exact same success response is returned as if the action had successfully occurred – preventing hackers from identifying users through this medium. Once the validity of the user has been confirmed, I moved on to generating a secure and unique reset token – while there were many methods for this (including reusing existing JWT code for this purpose) – I wanted the assurance of a URL-safe token, and therefore chose to use the built-in Python library secrets and their token_urlsafe function, which allowed me to be assured that the token that was produced was compatible with my use case and to select the amount of bytes of the token itself. I then proceeded to invalidate any existing password reset tokens associated with the user through a filtered update query of the PasswordResetTokens, then subsequently created a new reset token record with the newly generated token, employee id and expiry time (the current time plus 30 minutes).

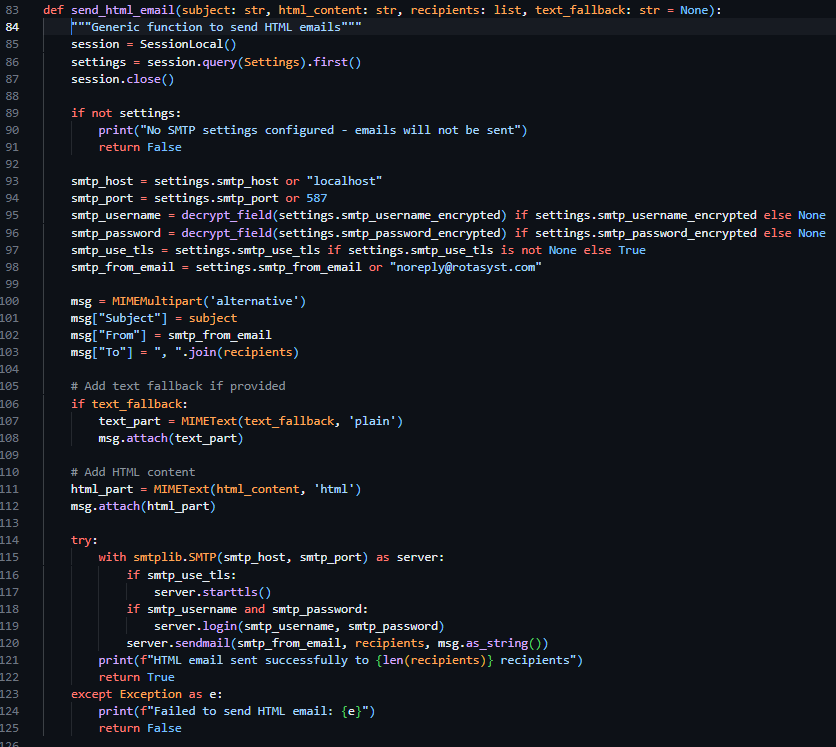

Following this I was required to present the token reset link to the user, through an email – and as a result decided to create a helper function to dispatch emails uniformly across the project making use of the SMTP credentials provided by the configurable settings system table.

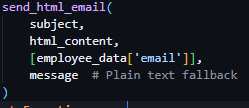

In the same file, I created a new helper function called send_html_email, which utilizes the built in email library in Python, specifically email.mime.multipart.MIMEMultipart, which enabled me – after I queried the Settings table for SMTP settings and printed a console error if these values were unset – to directly form the email from the provided parameters (subject, html_content, recipients, text_fallback) and dispatch the email according to the settings saved in the Settings table of the database.

Due to this function lacking the functionality to directly generate email content, I was required to store and generate these emails myself – and as a result I created a new file called email_templates.py and created a function called create_password_reset_request_email, requiring employee name, reset link and expires in as parameters. I then proceeded to create the body of this email using pure HTML and CCS then placed my code into https://jam.dev/utilities/css-inliner-for-email, a tool to convert typical HTML and CCS into compatible email versions. I then placed the newly in lined HTML content into the return statement of the function and used f-strings to place my function parameters in the string itself.

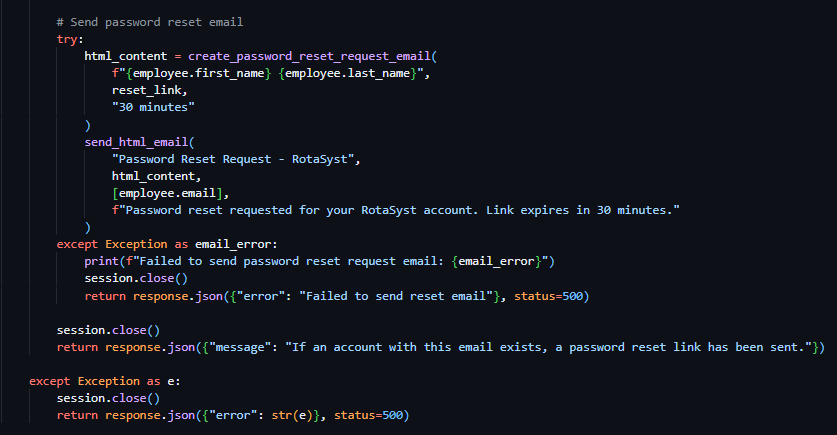

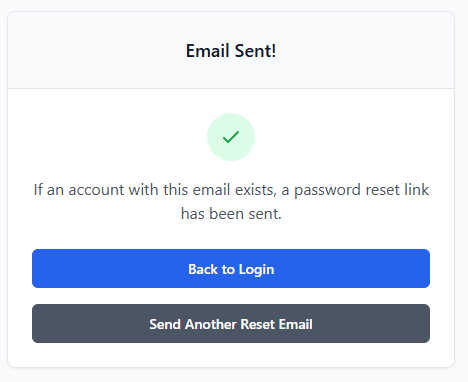

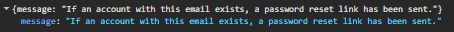

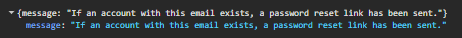

As a result of both helper functions being completed, I continued with the development of the password reset request route. I proceeded to make use of the send_html_email function and included the text "Password Reset Request - RotaSyst" as the email subject, the employee email as the recipient email address and the text "Password reset requested for your RotaSyst account. Link expires in 30 minutes." As the fallback text. I then created a variable called html_content and associated it with the invocation of the create_password_reset_request_email function, with the associated appropriate parameters. I then moved on to the sending of a success 200 response – with the message “If an account with this email exists, a password reset link has been sent”. When deploying the email to send the user the reset password URL, my first implementation failed my test dispatch – this was due to the SMTP settings not being configured in the database, I then proceeded to realise that this error was not conveyed to the user in any way so I modified the email delivery code and placed it in a try clause – tasked to catch all exceptions occurring during the building of the email body and its dispatch. I then placed an error response below the except portion of the try clause – enabling communication with the user about the email delivery failure (as seen in Figure 43).

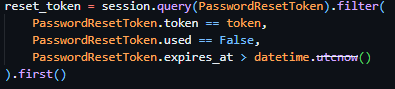

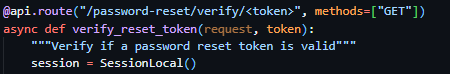

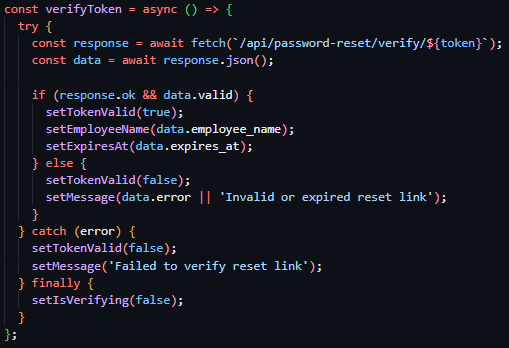

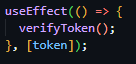

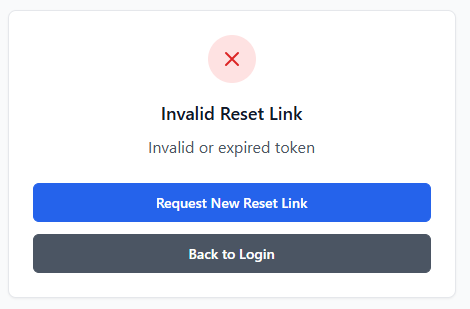

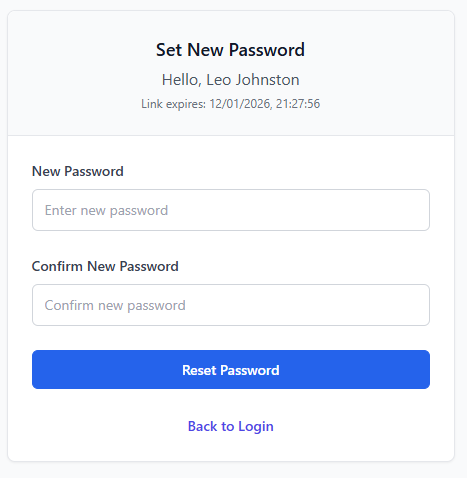

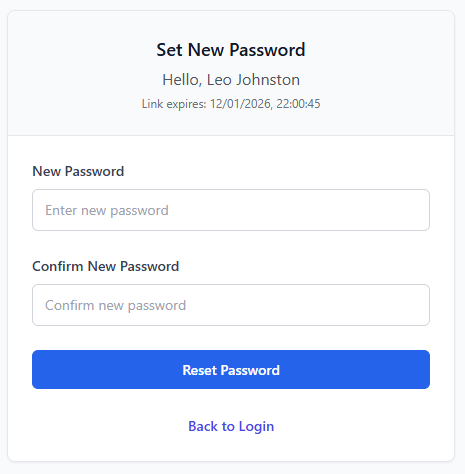

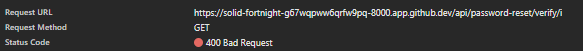

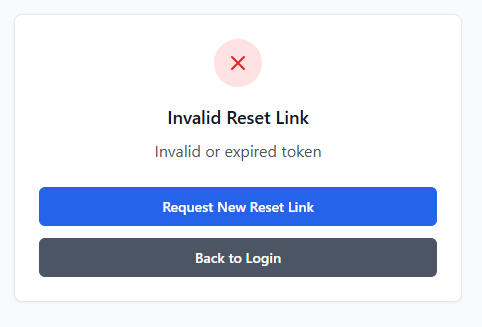

With the successful implementation of this route, I continued to the implementation of the token verification route – a route that should accept GET requests with the token as a part of its URL to check its validity prior to password reset submission. I proceeded to initialise this route through the same method as previous routes but included <token> in the URL – allowing me to access the URL content provided in this spot in the route handler, a method previously covered by the technical prototyping. I then proceeded to dispatch a filtered query to PasswordResetToken table, with the token provided as the search term and the conditions of PasswordResetToken.used being False and PasswordResetToken.expires_at being bigger than the current datetime object (see Figure 45). If this query returns nothing, an error response will be sent to the client detailing the error (“Invalid or expired token”).

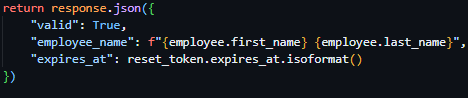

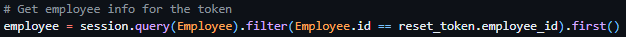

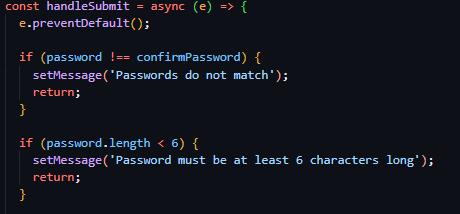

Proceeding this, the route then queries the Employee table, gathering further information on the employee via the employee ID provided by the reset tokens payload. And subsequently returns a successful JSON response containing a validity key, employee name key and a expiry time key.

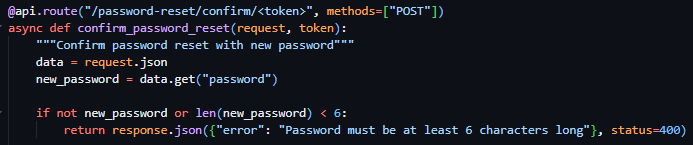

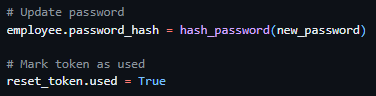

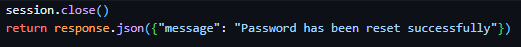

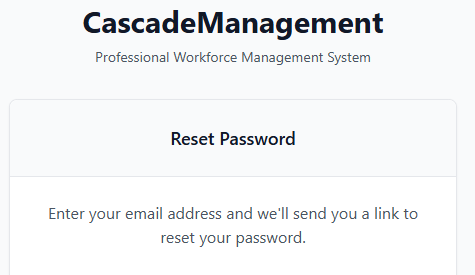

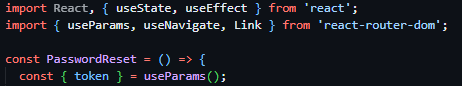

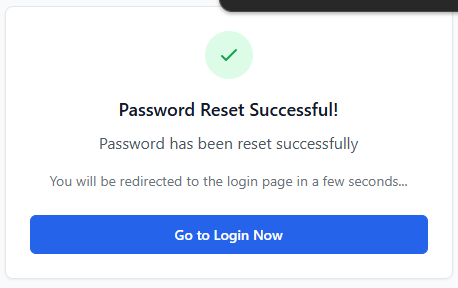

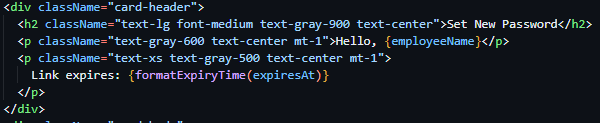

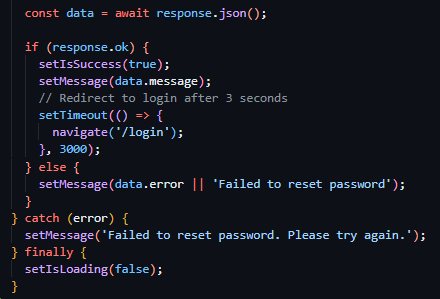

Following this, I moved on to the creation of the password reset confirmation route which required the inclusion of the reset token in the URL parameters once again. As well as the reset token, this route required the inclusion of the body parameter new password, which I validated to be present and to be over 6 characters (see figure 47). I then subsequently used the same method as the previously developed route to verify the tokens validity and presence and to gather the data associated with the user ID provided by the tokens payload and responded with the same error messages on the failure of these checks. I then proceeded to immediately hash the password provided by the request body and update it in the Employee table record associated with the user and marked the token as used (see figure 48). I then ended the request with a successful JSON response as seen in Figure 49.